SmokeyDope

- 4 Posts

- 23 Comments

2·1 day ago

why? I run it.

Mmm, how to say this. I suppose what I’m getting at is a philosophy of development and the known behaviors of corporate products.

So, here’s what I understand about CrowdSec. It’s essentially a centralized collection of continuously updated iptables rules and bot-scanning detectors that clients install locally.

In a way, its crowdsourcing works like a centralized mesh network: each client is a scanner node that phones threat data home to the company’s servers, which then update the shared rules.

Notice the operative word: centralized. The company owns that central home, and it’s their proprietary black box to do what they want with. And you know what for-profit companies like to do to their services over time? Enshittify them by:

- adding subscription tier price models,

- putting once-free features behind paywalls,

- changing data sharing requirements as a condition for free access,

- restricting free API access tighter and tighter to encourage paid tiers,

- making paid tiers cost more to do less,

- intentionally ruining features in one service to drive power users to a different one.

They can and do use these tactics to drive up profit or reduce overhead once a critical mass has been reached. I do not expect altruism and respect for users from corporations. I expect bean counters using altruism as a vehicle to attract users in the growth phase, who then flip the switch in their ToS to go full penny-pinching once they’re too big to fail.

CrowdSec’s pricing update from last year:

CrowdSec updated pricing policy

Hi everyone,

Our former pricing model led to some incomprehensions and was sub-optimal for some use-cases.

We remade it entirely here. As a quick note, in the former model, one never had to pay $2.5K to get premium blocklists. This was Support for Enterprise, which we poorly explained. Premium blocklists were and are still available from the premium SaaS plan, accessible directly from the SaaS console.

Here are the updates:

Security Engine: All its embedded features (IDS, IPS and WAF) were, are and will remain free.

SAAS: The free plan offers up to three silver-grade blocklists (on top of receiving IP related to signals your security engines share). Premium plans can use any free, premium and gold-grade blocklists. Previously, we had a premium and an enterprise plan with more features. All features are now merged into a unique SaaS enterprise plan. The one starting at $31/month. As before, those are available directly from the SaaS console page: https://app.crowdsec.net/

SUPPORT: The $2.5K (which were mostly support for Enterprise) are now becoming optional. Instead, a client can contract $1K for Emergency bug & security fixes and $1K for support if they want to.

BLOCKLISTS: Very specific (country targeted, industry targeted, stack targeted, etc.) or AI-enhanced are now nested in a different offer named “Platinum blocklists subscription”. You can subscribe to them, regardless of whether you use the FOSS Security Engine or not. They can be joined, tuned, and injected directly into most firewalls with regular automatic remote updates of their content. As long as you do not resell them (meaning you are the final client), you can use the subscription in any part of your company.

CTI DATA: They can be consumed through API keys with associated quotas. These are affordable and intended for use in tools like OpenCTI, MISP, The Hive, Xsoar, etc. Costs are in the range of hundreds of dollars per month. The Full CTI database can also be locally replicated at your place and constantly synced for deltas. Those are the largest plans we have, and they are usually destined to L/XL enterprises, governmental bodies, OEM & hardware vendors.

Safer together.


Comments Section

ShroomShroomBeepBeep • 1y ago

Whilst I’m pleased to see it made clearer, £290 a year for each security engine is still far too expensive for me to consider it.

GuitarEven • 1y ago

We get that £290 is too high for individual home labs. Those offers are made for companies.

Free tier features should cover homelabs correctly. Features that are oriented for enterprise clients.

If a company cannot invest $300 yearly in its security, no judgment and the free tier will still be very helpful until it recovers some budget margins to strengthen its security posture.

[deleted] • 1y ago

Any idea why we dont have any good free / freemium (max $5 per month) app yet. Reason am asking - adguard, urigin etc had filters which matches js/domains and filters them out. Same logic can be applied atleast for the ip lists - so that these ips can be added to iptables to block. A lot of things are easy to make. The tough ones are things like scenarios and may be ssh bw etc. I wonder why no real competition.

GuitarEven • 1y ago

hi u/ElizabethThomas44

Well you actually do. To date, for free, you get:

- the security engine (IDS/IPS/WAF)

- all scenarios

- the blocklist of IPs you are participating to detect when you use scenarios and share signals

- the free tier of the console

The IPs you automatically get for free are already added to your nftables or iptables using the related remediation component.

<TL/DR> You already have it.

(damn, personal reddit account, sorry, this is Philippe@CrowdSec)

At the end of the day it’s not the thousands of anonymous users contributing their logs or the FOSS volunteers on git getting a quarterly payout. They’re the product: free compute and live-action pen testing guinea pigs, no matter what PR gets spun about how much the company cares about the security of the plebs using their network for free.

It’s always about maximizing the money with these people; your security can get fucked if they don’t get some use out of you. Expect that at some point the ToS will change so that anonymized data sharing is no longer an option for the free tier.

What happens if the company goes bankrupt? Does it just stop working when their central servers shut down? Does their open source security have the possibility of being forked and run from local servers?

It doesn’t have to be like this. Peer-to-peer decentralized mesh networks like YaCy already show that a crowdsourced network of users can all contribute to an open database: something that can be run entirely as a local node which federates and shares its updates with the global network. Something like that which maintains a global iptables blocklist would already be a step in the right direction. In that theoretical system there is no central monopoly. It’s like the fediverse: everyone contributes to hosting the global network as a mesh, which altruistic hobbyists can contribute free compute to on their own terms.

https://github.com/yacy/yacy_search_server

“I don’t see anything wrong with people getting paid” is something I see often in discussions. There’s nothing wrong with people who do work and make contributions getting paid. What’s wrong is that it isn’t the open source community on GitHub or the users contributing their precious data getting paid; it’s a for-profit centralized monopoly that controls access to the network which the open source community built for free out of altruism.

The pattern is nearly always the same. The thing that once worked well and which you relied on gets slowly worse with each ToS update, while the pricing inches just a dollar higher each quarter, and you get less and less control over how you get to use the product. It’s pattern recognition.

The only solution is to cut the head off the snake. If I can’t fully host all of the components, see the source code of the mechanisms at all layers, and own a local copy of the global database, then it’s not really mine.

Again, it’s a philosophy thing. It’s very easy to look at all that, shrug, and go “whatever, not my problem, I’ll just switch if it becomes an issue”. But the problem festers the longer it’s ignored or enabled for convenience. The community needs to truly own the services they run on every level, it has to be open, and for-profit bean counters can’t be part of the equation, especially for hosting. There are homelab hobbyists out there who will happily eat cents on an electric bill to serve an open service to a community. Get 10,000 of them on a truly open source decentralized mesh network and you can accomplish great things without fear of being the product.

-

10·1 day ago

If CrowdSec works for you that’s great, but it’s also a corporate product whose premium sub tier starts at $900/month, not exactly a pure self-hosted solution.

I’m not a hypernerd, still figuring all this out among the myriad of possible solutions with different complexity and setup times. All the self-hosters in my internet circle started adopting Anubis so I wanted to try it. Anubis was relatively plug and play, with prebuilt packages and great install guide documentation.

Allow me to expand on the problem I was having. It wasn’t just that I was getting a knock or two; I was getting 40 knocks every few seconds, scraping every page and probing for a bunch of pages that didn’t exist, the kind that serve as exploit points on unsecured production VPS systems.

On a computational level, the constant stream of web pages, zip files and images downloaded by scrapers pollutes traffic. Anubis stops this by trapping them on a landing page that transmits very little information from the server side. The bot hammers that Anubis page 40 times on a single open connection before it gives up, which reduces overall network activity and data transferred (often billed as a metered resource) as well as log noise.

And this isn’t all or nothing. You don’t have to pester all your visitors, only those with sketchy clients. Anubis uses a weighted priority which grades how legit a browser client is. Most regular connections get through without triggering anything; weird connections get various grades of checks depending on how sketchy they are. Some checks don’t require proof of work or JavaScript.

On a psychological level it gives me a bit of relief knowing that the bots are getting properly sinkholed and I’m punishing/wasting the compute of some asshole trying to find exploits in my system to expand their botnet. And a bit of pride knowing I did this myself on my own hardware without having to cop out to a corporate product.

It’s nice that people of different skill levels and philosophies have options to work with. One tool can often complement another, too. Anubis worked for what I wanted: filtering out bots that waste network bandwidth and giving me peace of mind where before I had no protection. All while not being noticeable for most people, because I have the ability to configure it to not heckle every client every 5 minutes like some sites want to do.

37·2 days ago

Something that hasn’t been mentioned much in discussions about Anubis is that it has a graded tier system for how sketchy a client is, and it changes the kind of challenge based on a weighted priority system.

With the default bot policies it ships with, squeaky-clean regular clients are passed through, only slightly weighted clients/IPs get the metarefresh challenge, and it’s only at the moderate-suspicion level that the JavaScript proof of work kicks in. The bot policy and weight triggers for these levels, the challenge action, and the duration of a client’s validity are all configurable.
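To make the tiering idea concrete, here’s a rough Python sketch of how a weight-to-challenge mapping like this can work. The tier names, weight cutoffs and action labels are made up by me for illustration; they are not Anubis’s actual defaults or code, so check the bot policy docs for the real values.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Tier:
    name: str
    min_weight: int  # inclusive lower bound (hypothetical cutoff)
    action: str


# Hypothetical tiers, ordered from most to least suspicious.
TIERS = [
    Tier("high-suspicion", 20, "proof-of-work (hard)"),
    Tier("moderate-suspicion", 10, "proof-of-work (easy)"),
    Tier("mild-suspicion", 1, "metarefresh"),
]


def pick_action(weight: int) -> str:
    """Return the challenge for a client with the given suspicion weight."""
    for tier in TIERS:
        if weight >= tier.min_weight:
            return tier.action
    return "allow"  # clean clients pass straight through


for w in (0, 5, 12, 30):
    print(w, "->", pick_action(w))
```

The point is just that most visitors land in the bottom tier and never see a challenge, while the expensive checks are reserved for clients that have already racked up suspicion.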

It seems to me that the sites that heavy-hand the proof of work for every client, with validity that only lasts 5 minutes, are the ones giving Anubis a bad rap. The default bot policy settings Anubis comes with don’t trigger PoW on the regular Firefox Android clients I’ve tried, including hardened IronFox. Meanwhile other sites show the finger wag on every connection no matter what.

It’s understandable why some choose strict policies, but they give the impression that this is the only way it should be done, which is overkill. I’m glad there are config options to mitigate the impact on normal user experience.

3·2 days ago

What use cases does Perplexity do that Claude doesn’t for you?

62·2 days ago

There’s a challenge option that doesn’t require JavaScript. The responsibility lies with site owners to configure it properly IMO, though you can make the argument that it’s not the default, I guess.

https://anubis.techaro.lol/docs/admin/configuration/challenges/metarefresh

From the docs on the Meta Refresh method:

Meta Refresh (No JavaScript)

The metarefresh challenge sends a browser a much simpler challenge that makes it refresh the page after a set period of time. This enables clients to pass challenges without executing JavaScript.

To use it in your Anubis configuration:

    # Generic catchall rule
    - name: generic-browser
      user_agent_regex: >-
        Mozilla|Opera
      action: CHALLENGE
      challenge:
        difficulty: 1 # Number of seconds to wait before refreshing the page
        algorithm: metarefresh # Specify a non-JS challenge method

This is not enabled by default while this method is tested and its false positive rate is ascertained. Many modern scrapers use headless Google Chrome, so this will have a much higher false positive rate.

6·2 days ago

Security issues are always a concern; the question is how much. Looking at them, they seem at most to be ways to circumvent the Anubis redirect system and get to your page using very specific exploits. They are marked as low to moderate priority, and I do not see anything that implies something like system-level access, which is the big concern. Obviously do what you feel is best, but IMO it’s not worth sweating about. The nice thing about open source projects is that anyone can look through and fix things, and if this gets more popular you can expect bug bounties and professional pen testing submissions.

28·2 days ago

You know, the thing is that they know the character is a problem/annoyance. That’s how they grease the wheel on selling subscription access to a commercial version with different branding.

https://anubis.techaro.lol/docs/admin/botstopper/

Pricing from the site:

Commercial support and an unbranded version

If you want to use Anubis but organizational policies prevent you from using the branding that the open source project ships, we offer a commercial version of Anubis named BotStopper. BotStopper builds off of the open source core of Anubis and offers organizations more control over the branding, including but not limited to:

- Custom images for different states of the challenge process (in process, success, failure)

- Custom CSS and fonts

- Custom titles for the challenge and error pages

- “Anubis” replaced with “BotStopper” across the UI

- A private bug tracker for issues

In the near future this will expand to:

- A private challenge implementation that does advanced fingerprinting to check if the client is a genuine browser or not

- Advanced fingerprinting via Thoth-based advanced checks

In order to sign up for BotStopper, please do one of the following:

- Sign up on GitHub Sponsors at the $50 per month tier or higher

- Email [email protected] with your requirements for invoicing, please note that custom invoicing will cost more than using GitHub Sponsors for understandable overhead reasons

I have to respect the play tbh, it’s clever. Absolutely the kind of greasy shit play that Julian from the Trailer Park Boys would do if he were an open source developer.

19·8 days ago

It’s food, man. It belongs if you like it, it doesn’t if you don’t. I don’t understand some of these arguments where “the originating culture did it this way so it belongs!” Who cares how people halfway across the world make their burritos, fuck culture and tradition. Cooking is art, do what you want and make it how you like. Personally I put rice in because it’s an easy nutritious filler that doesn’t impact taste or texture too badly and which stretches the good meats and seasoning and stuff out over a couple of meals.

Did you write this, genuinely? It is pure poetry such that even Samurai would go “hhhOooOOooo!”.

Yes I did! I spent a lot of time cooking up an axiomatic framework for myself on this stuff, so I’m happy to have the opportunity to distill my current ideas for others. Thanks! :)

And it is so interesting, because, what you are talking about sounds a lot like computational constraints of the medium performing the computation. We know there are limits in the Universe. There is a hard limit on the types of information we can and cannot reach. Only adds fuel to the fire for hypotheses such as the holographic Universe, or simulation theory.

The Planck length, Planck time and Planck constant are all ultimate computational constraints on physical interactions: the Planck length is the smallest meaningful scale, the Planck time is the smallest meaningful interval (a universal framerate), and Planck’s constant ties the two together, telling us how fast things can compute at the smallest meaningful steps of interaction and setting ultimate bounds on how much energy we can put into physical computing.
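For reference, the Planck length and Planck time fall straight out of three measured constants. A quick sketch using the standard definitions (the variable names are mine, the constant values are the usual CODATA figures):

```python
import math

HBAR = 1.054571817e-34  # reduced Planck constant, J*s
G = 6.67430e-11         # gravitational constant, m^3 kg^-1 s^-2
C = 299_792_458.0       # speed of light, m/s

# Standard definitions: l_P = sqrt(hbar * G / c^3), t_P = l_P / c
planck_length = math.sqrt(HBAR * G / C**3)  # metres
planck_time = planck_length / C             # seconds

print(f"Planck length ~ {planck_length:.3e} m")
print(f"Planck time   ~ {planck_time:.3e} s")
```

That works out to roughly 1.6e-35 m and 5.4e-44 s, which gives a sense of how far below anything measurable this floor sits.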

There’s an important insight to be had here, which I’ll share with you. Currently when people think of computation they think of digital computer transistors, Turing machines, qubits, and mathematical calculations. They picture our universe being run on an alien’s hard drive or some shit like that, because that’s where we’re at as a society, culturally and technologically.

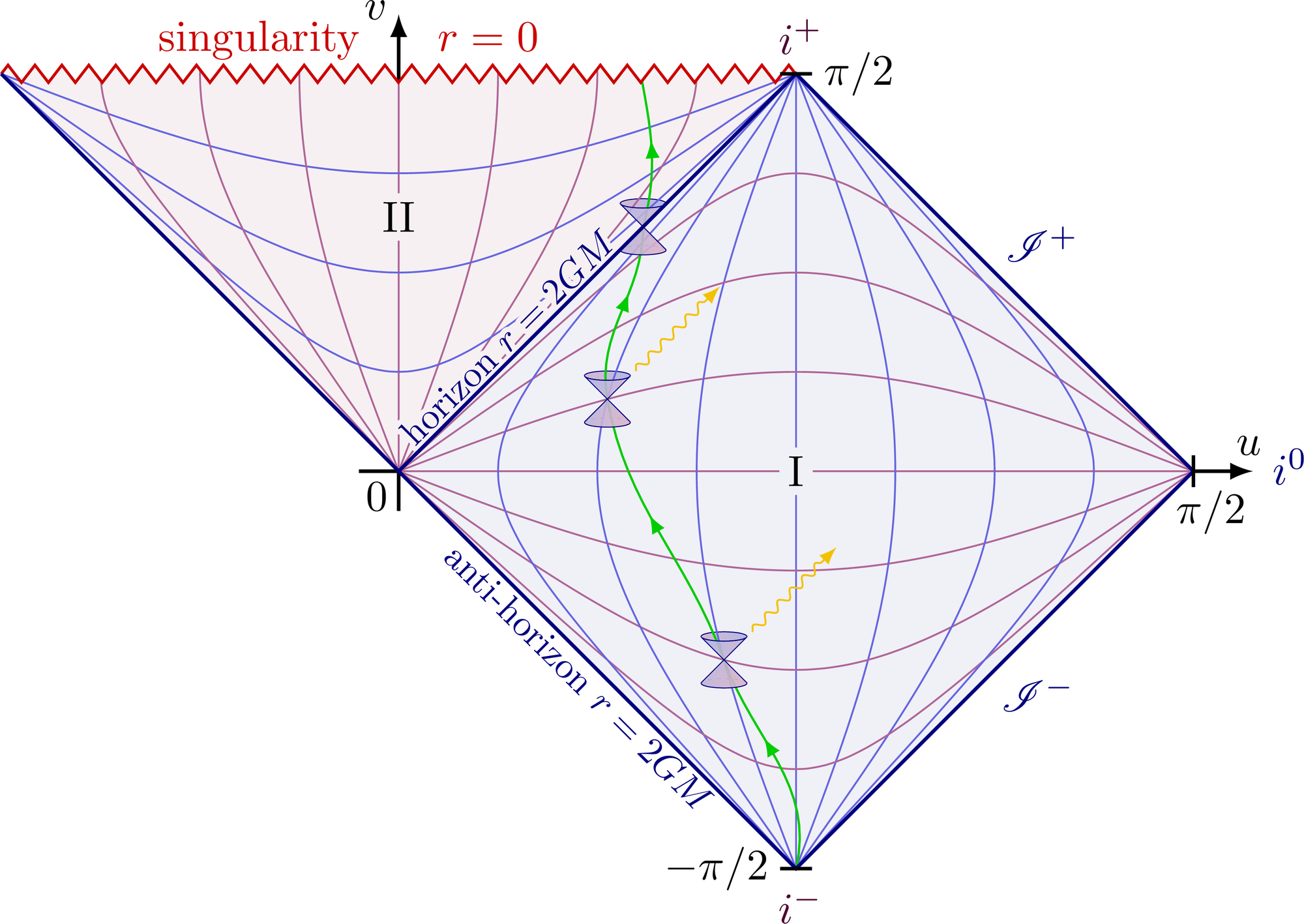

Calculation is not this, or at least it’s not just that. A calculation is any operation that actualizes/changes a single bit in a representational system’s current microstate, causing it to evolve. A transistor flipping a bit is a calculation. A photon interacting with a detector to collapse its superposition is a calculation. The sun computes the distribution of electromagnetic waves and charged particles it ejects. Hawking radiation/virtual particles compute a distinct particle from all possible ones that could be formed near the event horizon.

The neurons in my brain firing to select the next word in this sentence from the probabilistic sea of all things I could possibly say is a calculation. A gravitational wave emanating from a black hole merger is a calculation. Drawing on a piece of paper is a calculation, actualizing a drawing from all the things you could possibly draw on a paper. Smashing a particle into base components is a calculation, and so is deriving a mathematical proof through cognitive operation and symbolic representation. From a certain phase space perspective, these are all the same thing: operations that change the current universal microstate to another, iteratively flowing from one microstate bit actualization/superposition collapse to the next. The true nature of computation is the process of microstate actualization.

Landauer’s principle from classical computer science states that irreversibly changing a bit (for example, erasing it) in a classical computer has a minimum energy cost. This can easily be extended to quantum mechanics to show that every superposition collapse into a distinct qubit of information has the same actional energy cost structure, directly relating to Planck’s constant. Essentially, every time two parts of the universe interact, it is a computation that costs time and energy to change the universal microstate.
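For a sense of scale, the standard textbook form of Landauer’s bound for erasing one bit at temperature T is below, evaluated at room temperature (T = 300 K is just my example value):

$$E_{\min} = k_B T \ln 2 \approx (1.38 \times 10^{-23}\,\mathrm{J/K})(300\,\mathrm{K})(0.693) \approx 2.9 \times 10^{-21}\,\mathrm{J}$$

That is about 0.018 eV per bit, far below what real transistors dissipate per operation, but it is a hard floor rather than an engineering target.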

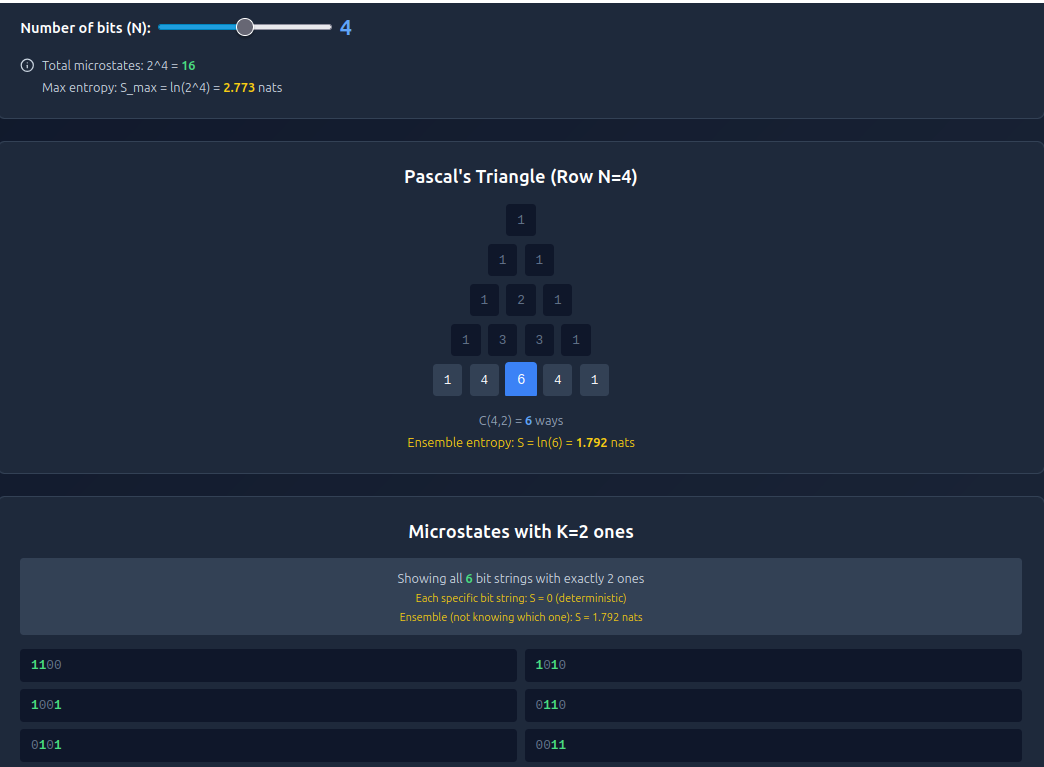

If you do a bit more digging with the logic that comes from this computation-as-actualization insight, you discover the fundamental reason why combinatorics is such a huge thing and why Pascal’s triangle/the binomial coefficients show up literally everywhere in STEM. Pascal’s triangle directly governs the number of microstates a finite computational phase space can access, as well as the distribution of order and entropy within that phase space. Because the universe itself is a finite state representation system with 10^122 bits to form microstates, it too is governed by Pascal’s triangle. On the 10^122th row of Pascal’s triangle is the series of binomial coefficients encoding the distribution of all possible microstates our universe can possibly evolve into.
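Here’s a toy version of that counting for a tiny system, as a sketch. For n bits, row n of Pascal’s triangle tells you how many microstates have exactly k bits set, and the whole row sums to 2^n. The function name is mine, and 4 bits is obviously a stand-in for anything universe-sized.

```python
from math import comb


def microstate_counts(n_bits: int) -> list[int]:
    """Row n of Pascal's triangle: entry k counts the n-bit states with exactly k bits set."""
    return [comb(n_bits, k) for k in range(n_bits + 1)]


row = microstate_counts(4)
print(row)       # [1, 4, 6, 4, 1]
print(sum(row))  # 16, i.e. 2**4 microstates in total
```

Notice how the counts bunch up in the middle of the row: the half-on, half-off states vastly outnumber the all-zero and all-one states, and that imbalance only gets more extreme as n grows.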

This perspective also clears up the apparently mysterious mechanics of quantum superposition collapse and the principle of least action.

A superposition is literally just a collection of unactualized, computationally accessible microstate paths a particle/algorithm could travel through, superimposed on top of each other, with time and energy being paid as the actional resource cost for searching through and collapsing all possible iterative states at that step in time into one definitive state. No matter if it’s a detector interaction, an observer interaction, or a particle collision interaction, same difference. Each possible microstate is separated by exactly one single bit flip’s worth of difference in microstate path outcomes, creating a definitive distinction between two near-equivalent states.

The choice of which microstate gets selected is statistical/combinatoric in nature. Each microstate is probability-weighted based on its entropy/order. Entropy is a kind of ‘information-theoretic weight’ property that affects the actualization probability of that microstate based on its location in Pascal’s triangle, and it’s directly tied to algorithmic complexity (more complex microstates that create unique meaningful patterns of information are harder to form randomly from scratch compared to a soupy random cloud state, and thus rarer).

Measurement happens when a superposition of microstates entangles with a detector, causing an actualized bit of distinction within the sensor’s informational state. It’s all about the interaction between representational systems and the collapsing of all possible superposition microstates into an actualized, distinct collection of bits of information.

Planck’s constant is really about the energy cost of actualizing a definitive microstate of information from a quantum superposition at smaller and smaller scales of measurement in space or time. Distinguishing between two bits of information at a scale smaller than the Planck length, or at a time interval smaller than the Planck time, would cost more energy than the universe allows in one area (it would create a kugelblitz, a pure-energy black hole, if you tried). So any computational microstate paths that differ from one another by less than a Planck length’s worth of distinction are indistinguishable, blurring together and creating a bedrock limit scale for computational actualization.

But for me, personally, I believe that at some point our own language breaks down because it isn’t quite adapted to dealing with these types of questions, as is again in some sense reminiscent of both Godel and quantum mechanics, if you would allow the stretch. It is undeterminability that is the key, the “event horizon” of knowledge as it were.

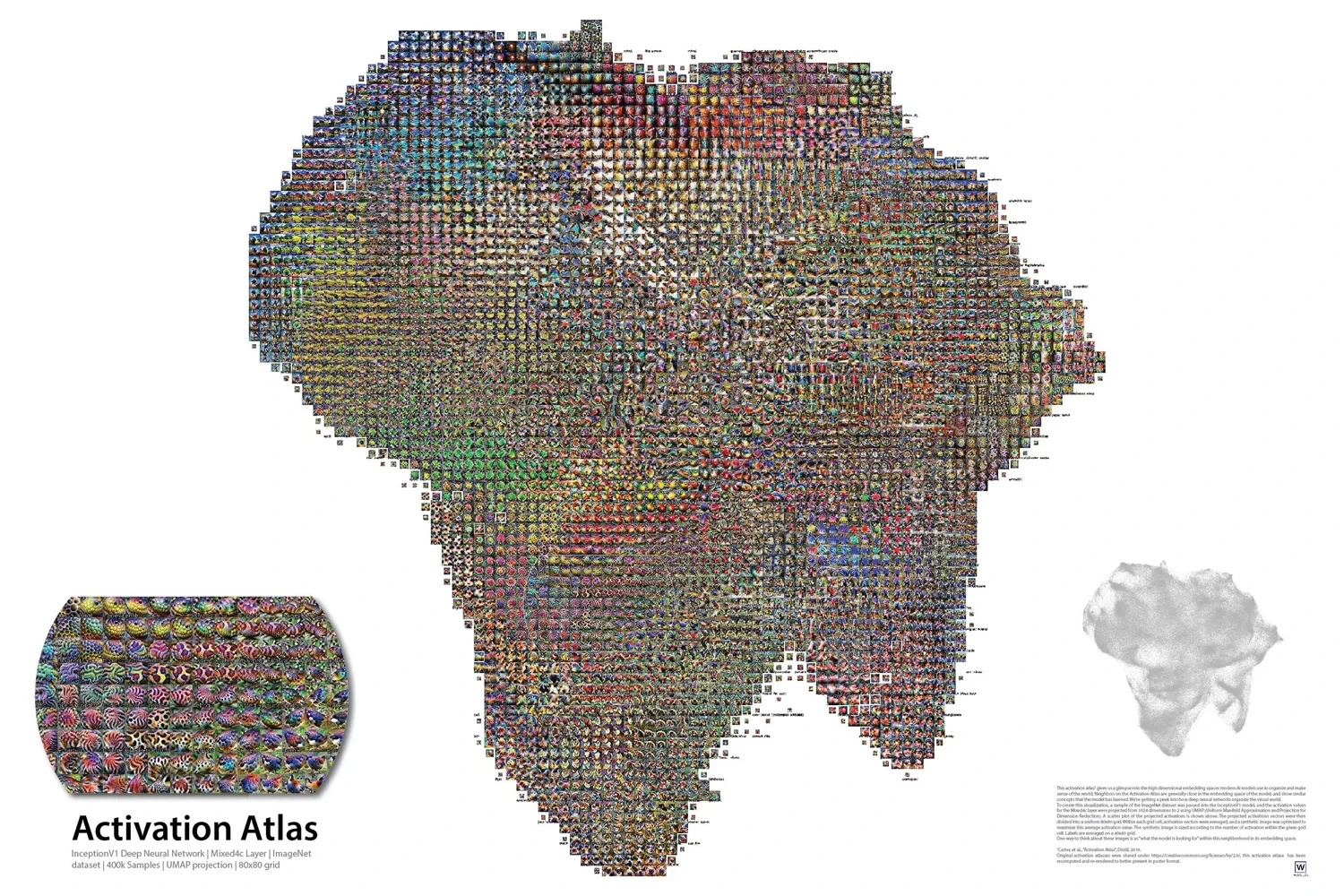

Language is symbolic representation; cognition and conversation are computations as your neural network traces paths through your activation atlas. Our ability to talk about abstractions is tied to how complex they are to abstract about/model in our mind. The cool thing is that language evolves as our understanding does, so we can convey novel concepts or perspectives that didn’t exist before.

You are close, I think! Though it’s not quite that simple IMO. According to Penrose spacetime diagrams, the roles of space and time get reversed in a black hole, which causes all sorts of weirdness from an interior perspective. Just like the universe has no center, it also has no single singularity pulling everything in, unlike a black hole. The universe contains many singularities; a black hole contains one singularity that might connect to many universes, depending on how much you buy into the complete Penrose diagrams.

Now here’s where it gets interesting. Our observable universe has a hard limit boundary known as the cosmological horizon, due to the finite speed of light and finite universe lifespan. It’s impossible to ever know what’s beyond this horizon boundary. Similarly, black hole event horizons share this property of not being able to know about the future state of objects that fall inside. A cosmologist would say they are different phenomena, but from an information-theoretic perspective these are fundamentally indistinguishable Riemann manifolds that share a very unique property.

They are geometric, physically realized instances of uncomputability, which is a direct analog of Gödelian incompleteness and Turing undecidability within the universe’s computational phase space. The universe is a finite computational system with a finite state representation capacity of about 10^122 microstates according to Bekenstein bounds and the Planck constant. If an area of spacetime exceeds this amount of potential microstates to represent, it gets quarantined in a black hole singularity so the whole system doesn’t freeze up trying to compute the uncomputable.

The problem is that the universe can’t just throw away all that energy and information due to conservation laws. Instead it utilizes something called the ‘holographic principle’ to safely conserve information even if it can’t compute with it. Information isn’t lost when a thing enters a black hole; instead it gets encoded into the topological event horizon boundary itself. In a sense the information is ‘pulled’ into a higher fractal dimension for efficient encoding. Over time the universe slowly works on safely bleeding out all that energy through Hawking radiation.

So say you buy into this logic, and assume that the cosmological horizon isn’t just some observational limit artifact but an actual topological Riemann manifold made of the same ‘stuff’, sharing properties with an event horizon, like an inverted black hole where the universe is a kind of anti-singularity which distributes matter everywhere dense as it expands instead of concentrating matter into a single point. What could that mean?

So this holographic principle thing is all about how information in high dimensional topological spaces can be projected down into lower dimensional space. This concept is insanely powerful and is at the forefront of advanced computer modeling of high dimensional spaces. For example, neural networks organize information in high dimensional spaces called activation atlases that have billions and trillions of ‘feature dimensions’, each representing a kind of relation between two unique states of information.

So, what if our physical universe is a lower dimensional holographic projection of the cosmological horizon manifold? What if the unknowable cosmological horizon bubble surrounding our universe is the universe’s fundamental substrate in its native high dimensional space, and our 3D+1T universe perspective is a projection?

Anything in particular you want to know about that only a GUT might provide? Or do you just want to see what it looks like?

Gödel proved decades ago, for all of mathematics including theoretical physics, that a true ToE can’t exist. The incompleteness theorem in a nutshell says that no consistent axiomatic system powerful enough to express arithmetic can prove every true statement about itself. There will always be truths of reality that can never be proven or reconciled with fancy maths, or detected with sensors, or discovered by smashing particles into base component fields. Really, it’s a miracle we can know anything at all with mathematical proofs and logical deduction and experimental measurement. It’s still possible we can solve stuff like quantum gravity, but no guarantees.

Something you need to understand is that physicist types don’t care about incompleteness or undecidability. They do not believe math is real. Even if it’s mathematically proven that we can’t know everything in formal axiomatic systems, theoretical physicists will go “but that’s just about math, you’re confusing it with actual physical reality!” They use math as a convenient tool for modeling and description, but absolutely tantrum at the idea that the description tools themselves are ‘real’ objects.

To people who work with particles, the idea that abstract concepts like complex numbers or Gödel’s incompleteness theorems are just as “real” as a lepton when it comes to the machinery and operation mechanics of the universe is heresy. It implies nonphysical layers of reality where nonphysical abstractions actually exist, which is the concept scientific determinists hate most. The only real things to a scientific determinist are what can be observed and measured; the rest is invisible unicorns.

So yes, it’s possible that there is no ToE or GUT because of incompleteness and undecidability, but physicists don’t care, and there’s something alluring about the pursuit.

- Rotation is meaningless without an external reference frame to compare against. Consider that right now the planet (and your body) is rotating at something on the order of 1,000 km/h (the exact figure depends on latitude), but to us it feels stationary. We only know the planet rotates because we observe effects like the sun, moon and stars appearing to rotate around us (which ancient peoples misunderstood as geocentrism, thinking everything rotates around us).

- Rotation mathematically requires a center axis to rotate around. There is no true center to our observable universe, only subjective perspective reference frames. Wherever you are is the center from your perspective. So there is no definitive geometric center axis of our universe to rotate around.
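As a quick sanity check on the rotation speed in the first point, here’s a back-of-envelope sketch of how fast the ground moves at a given latitude. It assumes a perfectly spherical Earth with the equatorial radius and one sidereal day per turn; the function and constant names are mine.

```python
import math

EARTH_EQUATORIAL_RADIUS_KM = 6378.1  # treating Earth as a sphere (assumption)
SIDEREAL_DAY_HOURS = 23.934          # one full rotation relative to the stars


def rotation_speed_kmh(latitude_deg: float) -> float:
    """Ground speed due to Earth's spin at the given latitude."""
    # Radius of the circle you trace out shrinks with cos(latitude).
    circle_radius_km = EARTH_EQUATORIAL_RADIUS_KM * math.cos(math.radians(latitude_deg))
    return 2 * math.pi * circle_radius_km / SIDEREAL_DAY_HOURS


for lat in (0, 45, 60):
    print(f"{lat:>2} deg latitude: ~{rotation_speed_kmh(lat):.0f} km/h")
```

At the equator this comes out a bit under 1,700 km/h and it halves by 60 degrees latitude, and none of it is something you can feel without an outside reference.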

-

4·14 days ago

“Yeah, I mean who even does that, right?” nervously side eyes Garrett from the Thief series with his occasional cool-ass one-liners

2·14 days ago

Your thirst is mine, my water is yours! … Oh shit, wrong sci-fi world I think, my bad

21·14 days ago

… I really hoped that this wasn’t a thing, but I’m not surprised in the least.

It’s just fucking painful, man.

1·14 days ago

Hi there Blaze! I hope all your non-human extended family are doing well. I’m a cat hoarder, and the kids have two hermit crabs. All my cats except for one are old seniors so they have some minor health stuff. I could go into all the little details but I think it suffices to say that they’re all still here with me, I love them all (even the stupid crabs), and I’m very grateful for that.

As a bonus their mom briefly became socialist when she freely shared some crabs and HIV with you :)

It explicitly checks for web browser properties to apply challenges, and all its challenges require basic web functionality like page refresh. Unless the connection to your server involves handling a user agent string, it won’t work. I think this is how it is, anyway. Hope this helped.