I recently discovered that some popular federated instances have been using LLM-assisted moderation tooling that evaluates whether someone has said something bannable. They do this by running a script/app that sends the user’s comment history to OpenAI with the question “analyze this content for evidence of specific political ideology sentiment. Also identify any related political ideology tropes”. (The italic bits are where I’ve redacted the ideology they’re seeking.)

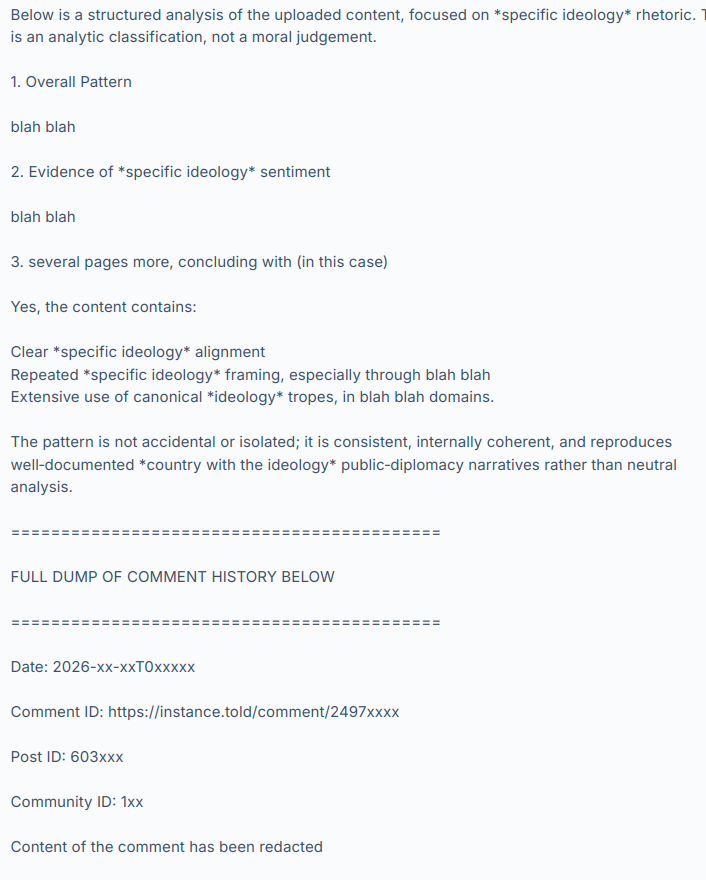

OpenAI’s LLM (they’re using GPT-5.3-mini) then responds with something like:

and so on, hundreds of comments.

I have not named the instances or people involved, to give them time to consider the results of this discussion, make any corrective changes they want and disclose their practices at their own pace and in their own way. I have also redacted the evidence to avoid personal attacks and dogpiling. Let’s focus on the system, not the individuals involved. Today these instances and people are using it, and maybe we’re OK with that because it’s being used by groups we agree with. But what if people we strongly disagree with used it on their instances tomorrow?

The use and existence of this tooling raises a lot of other questions too.

What are the risks? Fedi moderators are often unsupervised, untrained volunteers and these are powerful tools.

What safeguards do we need?

Would asking an LLM “please evaluate this person’s political opinions” give different results than “find evidence we can use to ban them” (as used in the cases I’ve seen)?

What are our transparency expectations?

Is this acceptable and normal?

Should this tooling be disclosed? (it was not – should it have been?)

If you were given a choice, would you have opted out of it?

Can we opt out?

Are there GDPR implications? Privacy implications? Should these tools be described in a privacy policy?

Are private messages being scanned and sent to OpenAI?

How long should these assessments be retained, and can we request to see them or ask for them to be deleted?

Once the user’s comments are sent to OpenAI, are they used to train its models?

What will the effect be on our discourse and culture if people know they are being politically profiled?

Where are the lines between normal moderation assistance tools, political profiling and opaque 3rd-party data processing?

I hope that by chewing over these questions we can begin to establish some norms and expectations around this technology. The fediverse doesn’t have any centralized enforcement so we need discussions like this to develop an awareness of what people want in terms of disclosure, privacy, consent and acceptable use. Then people can make choices about which instances they join and which ones they interact with remotely.

And of course there are the other issues with LLMs relating to environmental sustainability, erosion of workers’ rights, increasing the cost of living and on and on. I can’t see PieFed adding any functionality like this anytime soon. But it’s happening out there anyway so now we need to talk about it.

What do you make of this?

Oh fucking YIKES.

Do NOT send our post history straight to OpenAI, that’s just … extremely gross.

Sure, it’s “public”, but that doesn’t mean feeding it directly to the slop machine is okay.

– Frost

I think the use of AI or LLMs for the Fediverse is a fair topic of discussion. Instances are run by volunteers, and these instances are free to dictate how their communities are to be administered. These Instance Admins can determine the jurisdictions that they operate under, and the laws that they are subject to. I’d suggest then the use of “AI” or LLM products are a choice made by each Instance.

But, just as Instance admins have these powerful choices, I believe that end users should also be given notice that these products are expected to be used so they can decide to continue with their account or move. I also recall that when many “Redditors” decided to leave, they also made a lot of fuss about salting their posts so that their contents not be used as training material to develop a commercial LLM. I’d point out this was a time when Meta and others were found to be combing the internet, downloading pirated materials, and using these for training purposes.

In 2026, these LLMs have already taken these pirated materials and public social media posts. There are news articles of how failed start-ups are even selling their Slack and other work related chats as training materials as well. In time, perhaps these issues can be properly litigated in courts. But for social media Instances run by volunteers, resources are limited already. I don’t think these Instances should be responsible for the privacy of the end users. Rather, good education in generating throw-away email addresses, strong passwords, and VPN use can give users real choices.

While there’s an argument that posts should not have any expectation of privacy, that doesn’t mean we don’t collectively share an interest in the value of privacy - a human right. I don’t believe users who sign up for accounts on the Fediverse and are asked for their email to set up an account, expect in turn that information to just be published to the world with full, open access without some kind of notice or choice to opt out, for example. Ultimately though, I believe the issue must be dealt with at the government level, and that means people getting pitted against professional lobbyists and politicians.

I want to also clarify that there will also be times when intervention is necessary. We’re here to join together in community, and “AI” or LLM activity that ultimately attacks that objective should be a top issue. For example, I’m not sure anyone here would be comfortable with “AI” duplicating an Instance and impersonating the community members within it. Yet that did happen on Mastodon. I recall the community responding, making reports and complaints to ultimately get the instance taken down. Another example would be AI or LLM accounts that do not identify themselves as bots, and are here to post on the Fediverse to astroturf issues or manipulate discussion are clear threats to proper discussion.

But I don’t want to digress too far from the original concern, which was how people feel about LLMs processing Fediverse posts, and related issues. Assuming we are not discussing materials that are already restricted or illegal, we cannot control what people choose to share on these platforms of themselves, and I don’t suggest we even attempt or consider it. But we can try to control how much information these platforms retain about their users - and I suggest that should be as close to zero or nil as possible. In this way, even if an Instance admin faces terrible pressure from a state, the platform itself has as close to nothing additional to report or share besides the face value posts of an account.

I’d also want to point out that we all do some form of labelling or profiling as we go over posts or read. Is there a difference between what we do already, and what an LLM does to create an opinion to profile a collection of posts? Humans get it wrong all the time, as would any LLM for that matter. Computers are valued because they’re good at copying and pasting information. What changed exactly if one person copy and pastes for free and for themselves, vs another who copy and pastes on behalf of a third party for a fee? I’m not really inviting philosophical discussion, this is mostly a question for myself. Whether the copy and paste procedure is done once by hand or thousands of times by computer, I’m still weighing the question.

I think it comes down to exploitation of asymmetric information and the appropriate use of the profits from this exploitation. But I suppose that’s always been an evergreen issue.

LinkedIn’s LLM-powered automation banned my account on a false positive a few months ago, and it took ages to get it sorted out and they treated me like shit the entire way through even after acknowledging that they’d made a mistake. Sadly it’s extremely difficult to operate in my field without a LinkedIn account, because I would love to be able to delete it.

This shit is poison

I’m mostly just surprised that a mod would pay for tokens to moderate. The Fediverse is radically public by design, so I don’t have any expectation of privacy. I’d bet at least someone is gobbling up the entire Fediverse to train AI, since companies are so desperate for new human-generated data.

Lol it’s dbzer0 isn’t it.

But also, I don’t really care. The fediverse is open by design, you don’t even need an account to access the data. I don’t like it but we can’t really do anything against it.

Is Rimu okay lately? He’s been acting so hostile.

What now? Nothing, really, because nothing has really changed. I don’t care whether an admin tool is based on an LLM or on a simple regular expression. I only care about the outcome, meaning the mod actions it takes.

I think you’re just looking for excuses to defederate from dbzer0. I think you’re throwing things at the wall to see what sticks.

inb4 if you don’t like being modded by AI start your own instance

Personally I’m shocked that this isn’t more prevalent.

Reddit was already hard enough to moderate without AI tools. Now, in 2026, with what amounts to entirely volunteer-based “companies” or not-for-profits (at least) running Lemmy instances for us for free, you have to get AI help for moderating.

I’ve been working on a competitor to the ActivityPub protocol and I have a ready-made solution called userless. The only reason I’ve never deployed a demo server for other people to test and interact with is that I have no idea how I would moderate it! That’s encouraged me to work on the peer-to-peer version of the protocol so I don’t have to moderate it at all, but still, this isn’t easy.

And to address the privacy concerns about who is moderating you… This is the public internet, your data is shared because you share the data. How can you expect privacy in public?

Name names. The only people you’re protecting are scumbags.

I don’t like this happening, and there should be transparency in all moderation decisions, but some of these points make no sense.

There is essentially no expectation of privacy on threadiverse platforms. Everything is public and probably already being used to train models.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

Thank you for calling this out. I think people assume that since it’s held by private instance owners that the fediverse is secure. I’ve posted this comment many times, that no, the fediverse is quite literally by design open and unencrypted.

A post is literally blasted out to anyone who listens, same with comments, upvotes, downvotes, everything can be saved, stored, and used for whatever anyone who listens wants. It should be completely assumed that nefarious agencies are currently listening and storing everything we do here. This is by design. It’s the tradeoff we have of having an open platform. Anyone can spin up a server, and that means anyone.
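To make that concrete, here is a rough sketch of what a federated vote might look like on the wire. It uses the ActivityStreams `Like` and `Dislike` types; the actor and object URLs and the helper names `build_vote` and `what_a_listener_learns` are made up for illustration, and real software adds more fields (an activity id, signatures, audience and so on). The point is only that the voter's identity travels with the vote.

```python
import json

AS_CONTEXT = "https://www.w3.org/ns/activitystreams"


def build_vote(actor: str, target: str, upvote: bool = True) -> dict:
    """Build a simplified vote activity (hypothetical helper, not any project's real code)."""
    return {
        "@context": AS_CONTEXT,
        "type": "Like" if upvote else "Dislike",
        "actor": actor,    # who voted: visible to every server that receives this
        "object": target,  # what they voted on
    }


def what_a_listener_learns(raw_body: str) -> tuple[str, str, str]:
    """Everything a receiving server needs in order to attribute the vote."""
    activity = json.loads(raw_body)
    return activity["actor"], activity["type"], activity["object"]


# Illustrative URLs only; these accounts and comments do not exist.
vote = build_vote(
    "https://example.social/u/alice",
    "https://example.world/comment/123",
)
wire = json.dumps(vote)  # what gets POSTed to each listening server's inbox
print(what_a_listener_learns(wire))
```

Nothing in this payload is hidden from the receiver, which is why any server that gets the activity can store and republish it.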

DMs are similar, they’re blasted out to the other server. If the server admin of the user in question wants to read them, they can. Lemmy/the fediverse is not a secure messaging platform. That’s why the Lemmy devs literally put a Matrix handle option in the profile, to encourage people to use Matrix instead. A DM on here should be simple, to the point, and if need be, inviting them to speak on something secure.

Edit - As a perfect example of the fact that there should be no expectation of privacy here on Lemmy, as an Admin myself, I can see that @A_normy_mouse has been downvoting all of my comments here. Absolutely everything here is public and visible, even if I weren’t an admin there are tools to view this, regardless of your opinions. It’s imperative that everyone understand this.

Edit 2 - OP as well has downvoted me. @rimu@piefed.social I’m sorry if you disagree, but it’s irrelevant. You should assume that everything you do here can and will be used in ways that you disagree with; that is the nature of the fediverse. Mastodon, Pixelfed, Piefed, Lemmy: ActivityPub is an open and unencrypted protocol. Even if it were encrypted, you still put 100% of your trust in your server admin, and beyond that each server admin you are blasting your messages out to.

I’d highly suggest accepting this fact before trying to push for rules. The very nature of the Fediverse is that no one can dictate rules, and to do that the tradeoff quite literally is that everything is open and unencrypted.

Another way to think of this. I run a server myself. I made my own rules and decided how to run it. Now your server starts sending activity to my server. That’s your server’s choice. I didn’t agree to your rules, I may disagree with your rules, but you’re sending your data to my server, over which I have complete and total ownership. I didn’t click accept on a ToS, I didn’t agree to anything. Hell, on my server I could literally have a “By sending me your data you accept that I can do whatever I want with your data” notice. You sent me your data, I quite literally can do whatever I want. (Personally I won’t, but that’s how you should think of the fediverse.)

lol @ Rimu downvoting your post. Be careful he’s probably going to make a hit piece against you next!

Or just delete them entirely from piefed.social 😂

Those removals happened in the context of a mod calling Rimu a zionist (which he’s not).

It didn’t happen out of nowhere.

He’s been doing that for a long time.

Those removals happened in the context of a mod calling Rimu a zionist.

Is that supposed to make it better?

Is that supposed to make it better?

Rimu is not a Zionist. It’s this wrong accusation that escalated the tensions. I’ll clarify my comment.

That didn’t address anything even with the clarification, as it’s his go-to response. He’s been doing it since the instance was stood up.

That’s what he does when he doesn’t have anything he can say against you.

Idk. This and previous threads just lead to them saying “well, you just can’t be trusted” or “why don’t you believe me over your lying eyes”.

While you are technically correct, you’re implying that the “natural” state is a good enough state and nothing should be done about it.

My house has walls and a door; it doesn’t mean anyone can do anything they want with it. Even if the windows are clear, you’re not supposed to install a camera that watches my bedroom. Even if the door is open, you’re not supposed to walk in. As a society, it has been decided that we should respect each other, respect each other’s privacy. We have created rules, some written down and some implicit, for how to interact with each other.

That is the point of OP. The “natural” state is whatever exists with the technical means, but that doesn’t mean it’s ok (or not ok): do we want to respect each other? To take care of each other? I very much want that, because the technical means should be only a means to an end, and in that end I want respect. The technical means, to me, must adapt to the end, not the other way around.

I mean with the fediverse your house becomes more like a library, more still, everyone gets a copy of what you have that they never have to return. You can ask people not to do x or y with the text but at the end of the day there is nothing you can do to enforce it, sans defederation of course. But the main sticking point, data being fed to a 3rd party LLM, is moot if we’re talking about OpenAI which already crawls lemmy.world. Or in fact any website intended to be found via search engines (and then some). Anything you post on lemmy.world (the instance hosting this thread e.g.) will already be added to the ChatGPT training set.

You’re hyperfocusing on one point, as if that’s the only part that matters and ignoring all the rest. I don’t consider that helpful, hence the downvote.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

That is quite literally my point. Everything, absolutely everything here is open and can be used however any instance owner wants. You can say “leave that shit alone”, but there is no obligation to whatsoever.

You should assume every instance owner can and is viewing all of your private data, sending it through whatever LLM/mod tools they want. Are they? Probably not. But they can, and there is no obligation not to.

And there is no obligation to federate with you. Please don’t publicly discuss users’ votes.

You are correct, and it’s what I was suggesting op do if they don’t like what other admins do. It’s right there.

As for viewing votes there’s a whole site dedicated to showing everyone’s votes, it’s called Lemvotes. Feel free to say please, but people are still going to do it. Votes and everything elsewhere are very much public, best to get used to that now.

As for viewing votes there’s a whole site dedicated to showing everyone’s votes, it’s called Lemvotes

Which is why we’re not federated with it. Pasting lemmy.ml links returns a 404 error.

Great. You show me a 10-foot fence, I’ll show you an 11-foot ladder. Go ahead and audit every server that federates with you, send them a questionnaire. There’s absolutely nothing stopping the next lemvotes from setting another server up, and there is nothing stopping any three-letter agency from setting up their own listening server.

Interesting, so even you have no way to know whether I was one of the downvotes on this comment?

Yeah you can do that but now you’re on my do-not-trust list. And probably a few other people’s lists.

I appreciate you being open about your opinions because now I can make a more informed choice about interacting with you and the instance you run.

Don’t you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria are important to them? Even if your criteria are different?

GOOD. NO ONE should be trusted here! I’m just some guy who decided to spin up a server, there should be zero trust! THIS IS MY POINT.

Don’t you think everyone deserves the information they need to choose which instances they want to interact with, according to whatever criteria are important to them? Even if your criteria are different?

This depends on the trustworthiness of the admin themselves, and even then every admin is just some person who decided to spin up a server, just like me. Trust is built and earned, it shouldn’t be implicit. The option you have is to defederate, or leave and join another server.

I’m really not trying to be an asshole here, but your post is what caused me to do this. This is not a unique post, this is a fundamental core principle of the fediverse that every user must understand. By being here, you are not in a private, secure place; you are quite literally blasting every comment, post, and upvote to whoever wants to listen. Literally everyone. Any semblance of privacy is purely a UI trait. Rules/guidance are purely 100% based on what each server owner chooses.

It’s absolutely wild that the obvious needed to be pointed out at all, and that the reaction to it was ‘you just made my list, buddy’.

That’s a stupid take, you’re basically shooting the messenger here.

Yeah you can do that but now you’re on my do-not-trust list.

Are you J Edgar Hoover? Anyone who gives you mild criticism must be tagged and marked for distrust?

This is the person calling you a tankie. Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them. The absolute only way I could see this being useful is for something like trying to sniff out if a Lemmy.world mod account is a leftist infiltrator or not.

You could maybe run a speech pattern comparison but that’s it. For everything else you just made Stupid Reddit and the purpose of their forum is to feed training data to ChatGPT so that it can profile Fediverse users.

Stop throwing a tantrum like a child. You ranted. It was explained to you why your tantrum is pointless. Move on.

You’re hyperfocusing on one point, as if that’s the only part that matters and ignoring all the rest. I don’t consider that helpful, hence the downvote.

Huh? What exactly are your expectations here, that everybody addresses every point in every comment? You just listed like 2 dozen points of discussion in the op, every comment would be an essay. Scrubbles has a good point that should honestly be foundational to the discussion, and they’re being respectful, so I really don’t understand what your problem is here.

If you really wanted their take on your other points, instead of downvoting you could’ve just asked for it. You know, have a discussion? Or just let it stand alone, it’s still a valid take.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

Anyone (anyone) can be an admin of their own instance, there’s absolutely nothing exclusive about it. Hell you don’t even have to go through the work of doing that, there’s other tools. Lemmy/Piefed are super open, by design.

Anyone (anyone) can be an admin of their own instance, there’s absolutely nothing exclusive about it. Hell you don’t even have to go through the work of doing that, there’s other tools. Lemmy/Piefed are super open, by design.

And any admin can ban someone or defederate from someone’s instance for doing it.

Lemmy/Piefed are super open, by design.

If Lemmy could have kept the votes hidden, it would have, but the nature of federation precludes it. So instead it does the best it can to not make them obvious. In the case of lemvotes.org, lemmy.ml is defederated from it so that it doesn’t have access to our votes.
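Roughly, defederation of this kind amounts to a filter on outgoing deliveries. A minimal sketch, assuming a plain domain blocklist: the function name `delivery_targets`, the inbox URLs and the blocklist format are all hypothetical, and this is not Lemmy's actual implementation.

```python
from urllib.parse import urlparse


def delivery_targets(inboxes: list[str], blocked_domains: set[str]) -> list[str]:
    """Return the inbox URLs an activity would still be delivered to."""
    allowed = []
    for inbox in inboxes:
        # Compare on the hostname only, case-insensitively.
        host = (urlparse(inbox).hostname or "").lower()
        if host not in blocked_domains:
            allowed.append(inbox)
    return allowed


# Placeholder domains; "vote-viewer.example" stands in for a blocked vote-listing site.
inboxes = [
    "https://friendly.example/inbox",
    "https://vote-viewer.example/inbox",
]
print(delivery_targets(inboxes, {"vote-viewer.example"}))
```

A blocklist like this only covers domains that are already known; a new, unlisted server would still receive the activity.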

Our instances voted not to defederate from lemvotes, so votes are effectively public.

abusing your admin access

Everyone has admin access, including you…

I don’t think I do.

What is especially unhelpful is abusing your admin access to call out people’s votes. Leave that shit alone.

Agreed. This is poor form, and ban-worthy if continued.

Focusing on a point that one can address best and making the best possible case for/against it is probably better than trying to address everything at once.

Plus, if the full argument can be made without the weakest point, it should be. And if it cannot, then it cannot be made at all.

Water is wet. Zionist disapproves of Zionist moderation.

What a thoughtful reply from a dev after a detailed, cogent description of tensions and bottlenecks in the ux of the platform they are building.

It’s occasionally worth calling out that votes are also public. I think twice before hitting those buttons

Why would you care if anyone knows how you vote on comments?

The entire ad industry has been collecting preferences, likes, dislikes for decades. It’s one of the most profitable pieces of information.

No data is as useful as what makes you personally engage.

Not OP, but the votes being public (not only on comments but also on posts) make it really easy for someone with malicious intent to generate a profile on your interests, political and sexual orientation, health/mental issues, addictions and so on. It’s a goldmine of data that should be protected.

This only makes sense if your account contains personally identifiable information. If it doesn’t, then what can really happen?

It’s not that hard to identify people online. My account is definitely not private

Yes, but then you are willingly accepting the risk of posting in a fully public forum anyway. What I’m saying is, you could, if you wanted, not have personally identifiable information on your account.

The risk associated with being on a public forum has changed massively. Yes the data was always out there, but the ability to turn it into personally identifying information was not.

People are still grappling with that change.

That’s true, but this person also knows they are not hiding. There are countless others that don’t. That’s the reason they wrote what they wrote.

What I’m saying is that’s actually very hard unless you run a super sterile account on purpose. Even just your writing style is a pretty good fingerprint. Your IP. Any pictures you’ve posted. It’s a rough world out there for privacy

Okay, so, my first Reddit account was back when I was a clueless teenager. I posted all sorts of information that, in retrospect, was pretty foolish to post, including my specific location, my personal interests, and different clubs and organizations I belonged to.

I was using a pseudonym, of course. And I thought I was being safe by not giving my real name, my real address, or anything that I thought could identify me specifically. But there were probably hundreds of people in my high school who could have identified me by correlating my different posts and profiling the one person with that particular combination of interests and organizations.

And if I was still using that account, it would absolutely be possible to link me, security conscious as I am, to my high school self, and link that to my LinkedIn account. And quite possibly get me fired for my clueless teenage shit posting 😆

What I’m getting at is, one, lots of people do post personal information. And two, PII is a much broader category than people think, and if your account has a long post history you probably gave up a lot more information than you think you did.

You could still be identified by a lot of factors and the combination of those. IP address, email if provided, cookies + referrer on clicked links or loaded external images, browser fingerprint, clues from actual content in comments and posts, … It’s not that hard, a whole industry lives on this kind of surveillance data collection.

This gets into another thing with me, but I don’t care for public voting at all. I would vote a lot if it was private to me and only affected my feed.

Sometimes people ban based on votes, so some might worry about that?

There’s also those creepy people that take it upon their next fine hour to crawl through people’s histories. Trying to find anything that could boost the height of their soapbox and distressed egos. It always backfires, obviously, but it doesn’t take away from the fact that some really weird people are here and no one wants to have to deal with them.

Sometimes you get harassed by lunatics.

.ml I call you out.

Occasionally people have meltdowns and accuse/threaten other users for daring to vote a certain way, presuming specific motives for doing so

https://lemvotes.org/comment/lemmy.world/comment/23550342

here’s your comment

First of all: I don’t like this either.

There is no private messaging system. Direct messages are unencrypted and potentially visible to any instance admins. They should not be used to share anything sensitive.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

I would argue for this being actually illegal, at least under the GDPR.

But that was just one of many potential conflicts @rimu raised. We should concentrate on the real conflicts of LLM comment moderation.

edit: yes, I have actively downvoted all comments I disagree with under this post (and upvoted all I agree with). I don’t usually do it so much, but this post is a sort of opinion polling.

It’s very clear on signup, on the READMEs, even on the DM portal itself, that messages are unencrypted and there is no sense of privacy, and that admins have full visibility and can do what they want with them.

Agreed, but that admin is breaking his promise, duty, responsibility (call it what you will) if they then upload these messages to an LLM for evaluation.

There is no promise, duty, or responsibility that an admin has beyond legal and what they themselves promise. The fediverse is great in that if you disagree with your admin, you are free to leave and choose a different one.

As for GDPR, feel free to argue it, but when it’s claimed at every turn that messaging is unencrypted and basically open, well, I don’t think it’d hold up. It literally says to go use Matrix or something else.

you are free to leave and choose a different one.

I only have that freedom if the admin tells me that they use LLMs in this manner or if they federate with instances that do. At the moment everyone is in the dark.

and it will continue to be. Again, you need to understand this. There are no rules, guidelines or anything that an instance owner needs to follow beyond whatever legal requirements they have in their specific jurisdiction.

So, I guess in your pervalence, you are correct, you do not have that freedom. Even I, as an instance owner, do not have that freedom, because everything I’m typing here is being sent out to as many servers as are listening too. By being completely open so that anyone can spin up a server and listen for activity, it literally means that we are open and any server can listen for activity.

Anyone can spin up a server, create some LLM bot, and start replying to anyone they want. That instance can be defederated of course, but that is the only tool. This is what you signed up for, this is the open and free internet. We do not have any walls here.

Just because you technically CAN doesn’t mean you SHOULD. Respecting other people (by, say, not feeding all their posts directly to the slop machine, and not building scrapers) is kind of a key point of society.

There’s a difference between “out there” and “feel free to scrape and/or use for AI training”.

There may not be /legal/ obligations, but there absolutely are /social/ ones.

– Frost

Sure, but that’s not my point. Social pressures aside, there is no way for instance A to control what instance (or server, or data collector) B does with it. Unless you audit every server federated, there is no way to know if anyone is doing anything with your data.

3 letter agencies can and may even already have servers that look like ordinary fediverse servers already just happily listening and storing everything. No amount of social pressure here is going to stop that. So all users need to understand this about the fediverse, that anyone can do whatever they want with your data whenever. For privacy, go to Matrix.

by definition, that is adding walls and gates to the fediverse, which is why this whole thread started. The fediverse was specifically designed to oppose those features at all, specifically because of what we’re seeing now. Gates have keys and gatekeepers, and as we’ve seen in the greater world, those can change hands in the blink of an eye.

only recourse is to move one’s account to another place in hopes of finding more benevolent admins and more protective content moderation. Being able to move one’s account to another place within Mastodon is usually praised as a feature. When it comes to digital safety however, it is not. It relegates minorities to a digital life on the run, hoping for safety in a system that has no mechanism to actually enforce it.

Maybe some day we’ll come up with a technical solution that allows communities to group-administer fediverse servers, but until then, most of us are depending on the goodwill of server admins who let their users access services for free, and do all the work of maintaining the server. Being able to vote with your feet and create your own server seems so different than a “digital life on the run”.

The idea of a fediverse alliance where admins group together and create a community between instances is a good one though. There is a start to that with stuff like fediblock, but it is still mostly in the benevolent dictatorship model of governance, which I think is hard to get around since it is a lot of work to administer a server, even more so if it were democratically run, and most of us users depend on free access.

You’re a fucking AnCap? That explains soooooo much.

Wait, why do you think Rimu’s an AnCap?

Maybe you should read that post before commenting. It’s anti-AnCap at its heart.

Not sure about ancap, I think he’s just a plain old liberal, maybe a neoliberal.

You should assume everything you post here is being used to train LLMs. It doesn’t take an admin to do so. It takes anyone who feels like looking. And there’s already evidence that we’re being scraped.

To expand on standards of transparency in moderation decisions:

Lemmy was built with a public moderation log by design. The ethos of the platform includes accountability through transparency. Every action is recorded and preserved (short of defederation or instance shutdown).

This makes moderation auditable. Mods literally cannot do (much) shady stuff in secret. In essence, moderation policy is discernable from the logs. That’s part of why well-run communities have the rules clearly defined and mods follow their written policy.

If a community/instance wants to make political alignment a moderation offense, they’re free to do so. Many communities/instances are quite explicit about this. If a community wants to make moderation completely arbitrary, they are free to do so. That is somewhat less common, but also not unheard of.

In truth, any community can be designed and moderated in any way whatsoever that the mod chooses.

However, the success of a community depends on the quality of the content and the quality of the moderation. Good content brings people in, but bad moderation drives people out. When the moderation is unfair, it is bad for the health of the community, and ultimately bad for the health of the platform.

It is my experience that transparent moderation, such as announcing changes in policy, techniques, etc., is less work in the long run. It takes a bit of time and attention to roll out changes when they are open for community feedback, but that feedback will come in one way or another. If mods don’t provide a formal outlet, then users will make one. Mods operating opaquely give up their right to have the conversation on their time and terms. They also miss out on the wisdom of the crowd. I’ve been in many situations where community feedback provided a valuable insight or tool to face an obstacle through open discussion about policy.

All that being said, one of the major obstacles to growth of the Threadiverse is the woeful dearth of moderation tools. It’s extremely time intensive to do basic things like identifying alt accounts, vote manipulation, bot behavior etc. It is also subject to a lot of human error. This makes it discouraging for people to moderate. I have heard about tools that use AI to detect CP content and remove it quickly, which I think we can all agree is a good use of the tech. Tools like this are not built into the platform, but cobbled together by volunteer mods and admins to keep the platform safe, legal, and sustainable. If they were built in, then moderation would be far easier (and therefore likely better).

I have heard about tools that use AI to detect CP content and remove it quickly

i think we can thank db0 for those as well

No, AI is bad, db0 is an evil instance. Chairman Rimu has decreed it.

That’s presumably (and hopefully) not GenAI, but a much lighter classification model built with the sole purpose of judging if an image is problematic. I have no problem with those.

This is the person calling you a tankie. Someone so afraid of words that they need a hallucinating robot to hold their hand and confirm that everything is a secret plot against them. The absolute only way I could see this being useful is for something like trying to sniff out if a Lemmy.world mod account is a leftist infiltrator or not. Someone who had a different opinion on a current event.

You could maybe run a speech pattern comparison but that’s it. For everything else you just made Stupid Reddit and the purpose of their forum is to feed training data to ChatGPT so that it can profile Fediverse users.

This is the kind of shit dystopian novels are made out of. So angry about people calling out actions you built a tool to analyze why they did it, so you can purge users from your digital kingdom.

I for one welcome flat.world and Piefed showing their true intentions. Digital colonization of activitypub and removal of the people who helped to build it. They didn’t want to leave reddit, they wanted to be reddit. This is some Spez shit.

Maybe in 2 weeks Piefed will hard-code that anyone Rimu has tagged for disagreeing with them, or for mild criticism, is unable to make accounts or federate posts, with a false error code.

this is flat out not ok, does not matter who is doing it. our instances should defederate from all that do this.

I would opt out, that’s no question, but I don’t believe it’s possible. GDPR does not matter here, as nothing can be proven unless the perpetrators give themselves up.

There is no “AI” so I’m not sure what we’re talking about here.

But statistical methods will lead to the average. I’m guessing that means whatever is hegemonic and “normative” will be promoted. That’s most likely the point.

Not comfortable with this. Not at all.

You talk about instances utilizing this tooling, but in your comments you admit it’s just some mods. This is misleading, as talking about instances doing it assumes admin access and relevant instance policy, something which invites calls for defederation (as can clearly be seen from the comments in your post).

A random mod doing something is not the same as an instance doing it. Literally anyone can be a mod and they don’t get any more access than an anonymous account by doing so.

This is the second time in one week I see you throwing careless statements like chum in the water. I can’t help but notice a pattern emerging.

If the instance admins tolerate this, they are also responsible for it.

dbzer0 is explicitly pro-AI, so this “controversy” is a nothingburger to them (and me).

unlike your instance admin, who not only tolerates but amplifies defamatory screenshots of other instance admins, which can be debunked very easily: https://lemmy.dbzer0.com/post/67963752/25781975

Is this whataboutism?

It’s sorta relevant since this is an ongoing smear campaign to manufacture consent for the defederation of db0, but don’t worry, the precious fedditors are safe from seeing their dearly beloved De*tschland being called mean names while it collaborates with a genocidal occupation regime and other things that might not get printed in Der Stern.

Are they maybe unaware? I wouldn’t point fingers too quickly…

If they

/r/lemmysniper? hehe

It’s unruffled, an admin.

Huh? I don’t understand…

It is about the admin with the name ‘unruffled’.

As an instance admin, you should ban those mods.

Why?

I suppose if you have an instance wide policy against AI moderation, then any mod using AI for moderation is going against the rules. But what anyone “should” do on their own instance is really up to them.

It breaks the OpenAI boycott, and the tool can be abused to get excuses to ban someone the mod wants banned for personal reasons.

It breaks the OpenAI boycott

There’s no reason to assume that it’s using OpenAI.

the tool can be abused to get excuses to ban someone the mod wants banned for personal reasons.

Why make decisions based on such a hypothetical? And anyway, any mod automation tool can be abused in the same way.

Dr. Hans-Georg Moeller: Guilt Pride: A German Vanity Project Conquering the World

Sometime in the 2000s, a group of mostly Turkish women from an immigrant group called Neighborhood Mothers began meeting in the Neukölln district of Berlin to learn about the Holocaust. Their history lessons were part of a program facilitated by members of the Action Reconciliation Service for Peace, a Christian organization dedicated to German atonement for the Shoah. The Neighborhood Mothers were terrified by what they learned in these sessions. “How could a society turn so fanatical?” a group member named Nazmiye later recalled thinking. “We began to ask ourselves if they could do such a thing to us as well . . . whether we would find ourselves in the same position as the Jews.” But when they expressed this fear on a church visit organized by the program, their German hosts became apoplectic. “They told us to go back to our countries if this is how we think,” Nazmiye said. The session was abruptly ended and the women were asked to leave.

Cry quietly

Ahh sorry - I just checked more closely. It’s an admin doing it.

And your recent post showed that there’s no such LLM-based admin tooling, and you just misrepresented what the tool that is there actually does…